What synthetic patient data quietly breaks in clinical AI

*Based on an article published in Forbes Tech Council (May 2023)*

Synthetic patient data looks like an elegant answer to a hard problem. Healthcare AI teams need data. Real patient data is protected by HIPAA, GDPR, and a patchwork of national regimes; acquiring, de-identifying, and sharing it is slow and expensive. Synthetic data promises a way around that — generate patients who never existed, train on them instead, ship a model. Gartner has forecast that the majority of AI training data will be synthetic within a few years, and a vendor ecosystem has grown up to meet the demand ([Preprints.org review, 2025](https://www.preprints.org/manuscript/202507.2567/v1)).

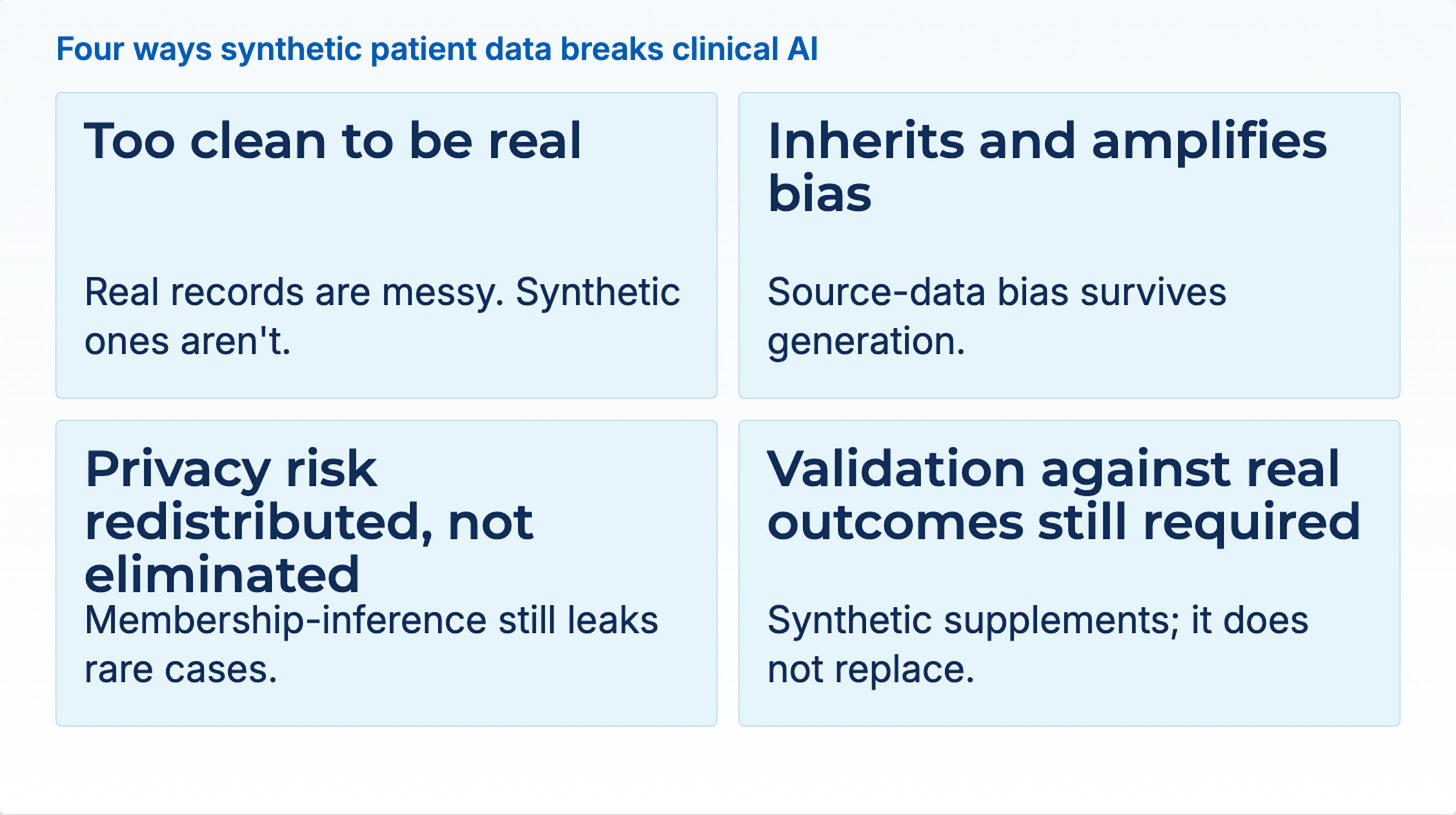

The elegance hides a set of real problems. Synthetic data is useful for specific things and dangerous for others. Teams that do not know the difference are shipping clinical models trained on data that systematically misrepresents the patients the model will eventually see — and that failure mode is not visible in any benchmark until something goes wrong in production.

Why synthetic data looks so attractive

The case for synthetic data is straightforward. Patient privacy regulations restrict data sharing. De-identification is technically difficult, legally fraught, and expensive to do at scale. Rare diseases and small subpopulations are systematically under-represented in real data, which limits the accuracy of AI models built to diagnose or serve them. Synthetic datasets appear to solve all three: no real patients means no privacy risk, no de-identification overhead, and — in theory — the ability to oversample rare cohorts to balance the training set.

The approach has genuine uses. Synthetic data is valuable for software testing, where you need realistic-looking records to exercise data pipelines without exposing PHI. It is useful for demos, training exercises, and teaching environments. It can help validate data-integration workflows before real data is loaded. For infrastructure work of this kind, it is a reasonable substitute for real data.

The problems start when synthetic data is used to train or validate models that will then make decisions about real patients.

Problem 1: synthetic data is too clean

Real patient records are messy in specific, consequential ways. Lab values are missing because the test was never ordered or because the sample was hemolyzed and rejected. Medications appear with typos, brand-name and generic-name variants, and dosages that disagree between the med list and the clinical note. Diagnoses are documented once and never reconciled across subsequent encounters. Clinical notes contain contradictions, hedged language, and the trail of reasoning that preceded a provisional diagnosis being changed.

Generative models trained to produce synthetic records optimize for statistical plausibility, not for this kind of messiness. The resulting data looks normal. It passes visual inspection. It trains a model that works beautifully on more synthetic data — and then fails on the real thing, because the real thing is nothing like what the model was trained to handle.
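
One way to make the "too clean" problem measurable is to compare how often each field is missing in real records versus synthetic ones. The sketch below is a minimal illustration, not a validated audit: the record layout, the field names (`hba1c`, `ldl`), and the helper names `missing_rate` and `missingness_gap` are all assumptions made for this example, and the toy values are invented.

```python
from typing import Dict, List, Optional

# Hypothetical record shape: field name -> numeric value, or None when missing.
Record = Dict[str, Optional[float]]


def missing_rate(records: List[Record], field: str) -> float:
    """Fraction of records in which `field` is absent or None."""
    if not records:
        raise ValueError("records must not be empty")
    missing = sum(1 for record in records if record.get(field) is None)
    return missing / len(records)


def missingness_gap(
    real: List[Record], synthetic: List[Record], fields: List[str]
) -> Dict[str, float]:
    """Real-minus-synthetic missing rate per field.

    A large positive value suggests the synthetic data is cleaner than
    the records the model will actually see.
    """
    return {
        field: missing_rate(real, field) - missing_rate(synthetic, field)
        for field in fields
    }


# Invented toy data: half of the real lab values are missing, none of the synthetic ones.
real = [
    {"hba1c": 7.2, "ldl": None},
    {"hba1c": None, "ldl": 130.0},
    {"hba1c": 6.1, "ldl": None},
    {"hba1c": None, "ldl": 101.0},
]
synthetic = [
    {"hba1c": 7.0, "ldl": 120.0},
    {"hba1c": 6.4, "ldl": 110.0},
    {"hba1c": 6.9, "ldl": 98.0},
    {"hba1c": 7.5, "ldl": 140.0},
]

gaps = missingness_gap(real, synthetic, ["hba1c", "ldl"])
print(gaps)
```

A check like this only covers missing values. Typos, conflicting dosages, and unreconciled diagnoses would each need their own comparison.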

A recent systematic review in *The Lancet Digital Health* put the problem in clinical terms: synthetic data generators frequently fail to preserve the complex interactions between variables that matter for outcomes, such as how obesity and socioeconomic status jointly influence diabetes severity ([Synthetic data, synthetic trust, 2025](https://pmc.ncbi.nlm.nih.gov/articles/PMC12778113/)). When those relationships break down in the training data, predictive models systematically underestimate risk for vulnerable populations — precisely the populations that motivated the move to synthetic data in the first place.

Problem 2: the biases come along for the ride

Generative models are only as good as the data they are trained on, and every bias in the source data is reproduced — often amplified — in the synthetic data derived from it.

This is easy to see once you trace the data provenance. A hospital in a wealthy U.S. metro serves a patient population with specific demographic, socioeconomic, and clinical patterns. A synthetic generator trained on that hospital’s data will produce synthetic patients who mirror those patterns: the same age distributions, disease prevalences, medication histories, and income and insurance mixes. Ship the generator to another team, and they train a model on synthetic data that preserves those patterns. The model is then deployed in a rural clinic, a safety-net hospital, or an international setting, and underperforms in ways no one sees coming.
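
The mismatch described above can be quantified before deployment by comparing how a categorical attribute is distributed in the training data and in the target population. The sketch below uses total variation distance, a standard measure equal to half the sum of absolute differences between two distributions. The payer categories, the counts, and the function names `shares` and `total_variation` are illustrative assumptions, not data from any real site.

```python
from collections import Counter
from typing import Dict, Iterable


def shares(labels: Iterable[str]) -> Dict[str, float]:
    """Convert a sequence of category labels into proportions."""
    counts = Counter(labels)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("labels must not be empty")
    return {label: count / total for label, count in counts.items()}


def total_variation(p: Dict[str, float], q: Dict[str, float]) -> float:
    """Total variation distance: 0.0 means identical mixes, 1.0 means disjoint."""
    categories = set(p) | set(q)
    return 0.5 * sum(abs(p.get(c, 0.0) - q.get(c, 0.0)) for c in categories)


# Invented payer mixes for the generator's source hospital and a deployment site.
training_payers = ["commercial"] * 8 + ["medicaid"] * 2
deployment_payers = ["commercial"] * 3 + ["medicaid"] * 5 + ["uninsured"] * 2

distance = total_variation(shares(training_payers), shares(deployment_payers))
print(round(distance, 3))
```

A single number like this does not say which cohorts are affected, so in practice it is worth inspecting the per-category shares as well.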

Peer-reviewed work has documented multiple mechanisms by which generative models amplify bias: distributional distortion in diffusion models, truncation-trick bias in GANs, and loss of rare-case representation when models are repeatedly retrained on generated output ([Shumailov et al., arXiv:2305.17493](https://arxiv.org/abs/2305.17493)). The authors of that paper coined the term “the curse of recursion” for the observation that models trained on generated data progressively forget the tails of the original distribution. Those tails hold exactly the patients, rare presentations, and unusual combinations of conditions that a clinical AI needs to identify correctly.
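
The tail-forgetting effect can be illustrated with a toy simulation. This is not the experiment from the cited paper. It is a deliberately simple stand-in in which each "generation" draws a finite sample from the previous generation's category frequencies and re-estimates the frequencies from that sample. The category names, probabilities, and the helpers `refit` and `support_over_generations` are assumptions made for the example.

```python
import random
from collections import Counter
from typing import Dict, List


def refit(probs: Dict[str, float], n_samples: int, rng: random.Random) -> Dict[str, float]:
    """Draw n_samples from probs, then re-estimate probs from observed frequencies."""
    labels = list(probs)
    weights = [probs[label] for label in labels]
    draws = rng.choices(labels, weights=weights, k=n_samples)
    counts = Counter(draws)
    # A category that was never drawn gets no entry, so it can never come back.
    return {label: count / n_samples for label, count in counts.items()}


def support_over_generations(
    probs: Dict[str, float], generations: int, n_samples: int, seed: int = 0
) -> List[int]:
    """Number of surviving categories after each round of refitting."""
    rng = random.Random(seed)
    sizes = [len(probs)]
    for _ in range(generations):
        probs = refit(probs, n_samples, rng)
        sizes.append(len(probs))
    return sizes


# Hypothetical presentation mix: one common pattern and several rare ones.
source = {"common": 0.90, "uncommon": 0.07, "rare_a": 0.01, "rare_b": 0.01, "rare_c": 0.01}
sizes = support_over_generations(source, generations=30, n_samples=100)
print(sizes)
```

Whatever the seed, the number of surviving categories can only stay flat or shrink, because a category that draws zero samples in one generation has zero probability in every later one. Rare categories are the most likely to hit that zero.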

Advocates point out that synthetic data can correct bias if you actively design the generator to do so. This is true. It is also a substantially harder engineering problem than “generate a statistically plausible version of our existing data,” and teams routinely conflate the two.

Problem 3: privacy risk is not eliminated, it is redistributed

The third pitch for synthetic data is privacy — no real patients, no risk. In practice, synthetic data carries privacy risks of its own, just in different forms.

Peer-reviewed work has shown that synthetic datasets can leak identifiable information about the individuals in the source data, either through membership-inference attacks or through direct reproduction of rare combinations of attributes that appear in only one real patient. The European Medicines Agency, *Nature*, and *PNAS* have all published on the risk ([Nature, 2025](https://www.nature.com/articles/d41586-025-02869-0); [PNAS, 2024](https://www.pnas.org/doi/10.1073/pnas.2414310121)). As with de-identification, the problem is hardest for exactly the cases synthetic data is supposed to help with: rare diseases, unusual presentations, and tail-of-distribution patients whose profile is close enough to unique that a generator cannot mask them while preserving their clinical reality.
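
A crude first check for the direct-reproduction risk is to look for attribute combinations that occur exactly once in the source data and also appear in the synthetic output. The sketch below assumes records are flat dictionaries and that the analyst has chosen a set of quasi-identifier fields. The field names, the toy records, and the function `leaked_unique_combinations` are hypothetical. It is not a membership-inference attack, and a clean result does not demonstrate that a dataset is private.

```python
from collections import Counter
from typing import Dict, List, Sequence, Tuple

Record = Dict[str, str]
Key = Tuple[str, ...]


def key_of(record: Record, fields: Sequence[str]) -> Key:
    """Project a record onto the chosen quasi-identifier fields."""
    return tuple(record[field] for field in fields)


def leaked_unique_combinations(
    source: List[Record], synthetic: List[Record], fields: Sequence[str]
) -> List[Key]:
    """Combinations held by exactly one source record that reappear in synthetic data."""
    counts = Counter(key_of(record, fields) for record in source)
    unique_in_source = {key for key, count in counts.items() if count == 1}
    synthetic_keys = {key_of(record, fields) for record in synthetic}
    return sorted(unique_in_source & synthetic_keys)


# Invented records: the rare-disease patient is unique in the source data.
fields = ["age_band", "zip3", "dx"]
source = [
    {"age_band": "40-49", "zip3": "981", "dx": "type 2 diabetes"},
    {"age_band": "40-49", "zip3": "981", "dx": "type 2 diabetes"},
    {"age_band": "20-29", "zip3": "995", "dx": "fabry disease"},
]
synthetic = [
    {"age_band": "40-49", "zip3": "981", "dx": "type 2 diabetes"},
    {"age_band": "20-29", "zip3": "995", "dx": "fabry disease"},
    {"age_band": "60-69", "zip3": "972", "dx": "hypertension"},
]

leaks = leaked_unique_combinations(source, synthetic, fields)
print(leaks)
```

Exact matching misses near-duplicates, such as a synthetic record that differs from a unique patient in one low-information field, so a real review would also need a distance-based comparison.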

There is also a research-ethics dimension that has quietly emerged. *Nature* reported in 2025 that some institutions have waived ethical review for research conducted on synthetic data, on the reasoning that no real humans are involved. The reasoning is wrong. Synthetic data derives from real human data, and the insights it produces affect real human patients when those insights are used to build clinical AI. The privacy layer may have moved; the ethical responsibility has not.

Problem 4: validation against real outcomes is still required

Even synthetic data that preserves statistical patterns well does not tell you how the model built on it will perform on real patients. That requires validation against real-world outcomes, which requires real-world data — the very thing synthetic data was supposed to let you skip.
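
The gap this paragraph describes can be made explicit by scoring the same model on a synthetic holdout and on a real holdout and reporting the difference. The sketch below is a minimal illustration under stated assumptions: the "model" is a hypothetical one-feature threshold rule, the examples are invented, and `accuracy` and `real_world_gap` are names introduced here rather than part of any library.

```python
from typing import Callable, List, Sequence, Tuple

# An example is (feature vector, binary label).
Example = Tuple[Sequence[float], int]
Predictor = Callable[[Sequence[float]], int]


def accuracy(predict: Predictor, examples: List[Example]) -> float:
    """Share of examples for which the prediction equals the label."""
    if not examples:
        raise ValueError("examples must not be empty")
    correct = sum(1 for features, label in examples if predict(features) == label)
    return correct / len(examples)


def real_world_gap(
    predict: Predictor, synthetic_holdout: List[Example], real_holdout: List[Example]
) -> float:
    """Synthetic accuracy minus real accuracy; positive means the benchmark flatters the model."""
    return accuracy(predict, synthetic_holdout) - accuracy(predict, real_holdout)


def threshold_model(features: Sequence[float]) -> int:
    """Hypothetical model: flag the case when the first feature reaches 6.5."""
    return int(features[0] >= 6.5)


# Invented holdouts: the synthetic one is cleanly separable, the real one is not.
synthetic_holdout = [([7.0], 1), ([5.5], 0), ([8.1], 1), ([6.0], 0)]
real_holdout = [([7.0], 1), ([6.2], 1), ([5.9], 0), ([6.4], 1)]

gap = real_world_gap(threshold_model, synthetic_holdout, real_holdout)
print(gap)
```

The comparison is only meaningful when the real holdout comes from the population the model will serve, which is why it cannot be produced without real data.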

This is the point regulators have been clearest on. The UK Medicines and Healthcare products Regulatory Agency, the FDA, and the EMA all require that clinical AI models used in regulated contexts be validated against real patient data before deployment. Synthetic data can supplement that validation and support model development; it cannot replace validation on real data. A recent review of synthetic data in laboratory medicine, available on ScienceDirect, put it directly: any insight derived from synthetic data must be rigorously validated against real-world outcomes before clinical implementation ([Synthetic data in the clinical laboratory, 2026](https://www.sciencedirect.com/science/article/pii/S0009898126000604)).

When to use synthetic data — and when not to

After a decade of building healthcare AI systems, I follow a straightforward rule of thumb. Use synthetic data when the goal is non-clinical: testing a data pipeline, exercising a privacy-preserving ETL, building a demo environment, training data scientists who are not yet cleared for PHI access, or validating an integration. In those contexts, synthetic data is often the right answer and carries few downsides.

Do not use synthetic data as the training or validation substrate for a clinical model that will eventually make decisions about real patients. Even the best synthetic data is downstream of real data and inherits its biases, its blind spots, and its privacy risks. A model trained on it may pass its synthetic benchmarks and fail its first real patient.

The alternative is not to give up on privacy protection. The alternative is to do the harder, more valuable work on real data: regulatory-grade de-identification that meets HIPAA Expert Determination, not just Safe Harbor; de-identification pipelines that handle clinical notes, PDFs, DICOM images, and structured records uniformly; federated or in-environment training that never moves data across organizational boundaries; and auditable data provenance, so that anyone who needs to can see where every training example came from.

This is where our team at John Snow Labs has put most of our investment over the years. Our de-identification pipeline achieves 96% F1 on PHI detection on peer-reviewed benchmarks — compared with 91% for Azure’s clinical NLP, 83% for AWS Comprehend Medical, and 79% for GPT-4o on the same evaluation — and runs inside the customer’s environment. We de-identified roughly 2 billion clinical notes at Providence under HIPAA Expert Determination, red-teamed by an independent third party, with zero confirmed re-identifications ([johnsnowlabs.com/case-studies](https://www.johnsnowlabs.com/case-studies/)). The real answer to the data-access problem is not generating fake patients well enough to fool your own model. It is handling real patient data well enough that you can use it safely.

Key takeaways

Synthetic patient data is a useful tool for non-clinical work: pipeline testing, demos, training exercises, integration validation. It is a poor training and validation substrate for clinical AI, because it tends to be too clean, inherits and often amplifies the biases of its source data, carries privacy risks of its own, and cannot be used to validate performance against real outcomes. The better path for healthcare organizations that want to build AI on patient data safely is regulatory-grade de-identification, in-environment processing, and auditable provenance on real data — not a synthetic layer that looks cleaner but hides the same problems and adds new ones.

FAQ

Is all synthetic patient data problematic for healthcare AI?

No. Synthetic data is fine — often ideal — for purposes that do not involve training or validating clinical models. Pipeline testing, user demonstrations, integration work, classroom exercises, and pre-production ETL are all appropriate uses. The problems arise when synthetic data becomes the basis on which a model that will affect real patients is trained or validated.

Doesn’t synthetic data solve the privacy problem?

It moves the problem rather than solving it. Synthetic datasets can still leak information about the individuals in the source data through membership-inference attacks, and rare-case patients are particularly at risk because their clinical profile is often close to unique. Institutions should treat synthetic data as deriving from real patients and apply appropriate oversight accordingly.

Why does synthetic data tend to be “too clean”?

Generative models optimize for statistical plausibility, not for the specific messiness of real clinical records — missing labs, typo’d medications, contradictory notes, unreconciled diagnoses. Models trained on the output perform well on synthetic test data and worse on real data, because they never learned to handle the noise that dominates real clinical work.

Can well-engineered synthetic data correct bias in the source data?

In principle yes, but only if the generator is deliberately engineered to over-represent under-represented cohorts rather than to produce a statistically faithful copy of the source. Most off-the-shelf synthetic-data workflows do the latter and therefore preserve or amplify source-data biases. Correcting bias with synthetic data takes real engineering work; it is not a property of synthetic data as such.

What about Synthea, MDClone, and other established synthetic-data systems?

Tools like Synthea generate patients from publicly available health statistics and clinical guidelines, which suits their actual use cases: software testing, teaching, and research prototyping. MDClone-style tools that derive synthetic versions from real hospital data are useful for certain research purposes. Neither kind of tool was designed as a replacement for real-data training and validation in regulated clinical AI, and teams should be cautious about using them that way.

What should organizations do instead?

Invest in regulatory-grade de-identification that meets HIPAA Expert Determination, handles structured and unstructured data uniformly, and runs inside your environment. Combine that with in-environment model training so data never leaves your control, and auditable provenance so every training example can be traced back to a real source. That is the path that scales, meets regulatory expectations, and produces models that actually work on real patients.

How do regulators view synthetic data in clinical AI validation?

The FDA, EMA, and UK MHRA all currently require validation of clinical AI models against real patient data before regulatory approval. Synthetic data can supplement model development and testing, but it does not replace the real-world evidence step. Any vendor or team that tells you otherwise is not reading the current regulatory guidance accurately.

---

David Talby is CEO of John Snow Labs, a healthcare AI company whose de-identification, medical NLP, and Medical LLM technology is used by 500+ healthcare and life sciences organizations. The Providence 2-billion-note de-identification project referenced here is described in a public case study at [johnsnowlabs.com/case-studies](https://www.johnsnowlabs.com/case-studies/).