Why the FDA chose NLP to close a blind spot in post-market drug safety

*Originally covered in FedScoop, Enterprise AI News, and Pharmaceutical Technology — April 2023*

The FDA’s Sentinel Initiative monitors the safety of drugs used by roughly 138 million people in the United States. It is the largest active post-market surveillance system in the world, and since 2016 it has informed more than 120 regulatory decisions, including label changes on hydrochlorothiazide, beta-blockers, and other commonly prescribed medications. Sentinel works. It also has a structural gap that insurance claims alone cannot close, and in April 2023 the FDA chose Cerner Enviza (an Oracle company) and John Snow Labs to help close it using natural language processing on clinical notes.

The blind spot Sentinel has always had

Sentinel’s backbone is insurance claims data. Claims are excellent for what they capture: prescriptions dispensed, procedures billed, diagnosis codes submitted, hospitalizations recorded. They cover clearly defined enrollment periods and are close to complete for the events a payer pays for.

They are also missing most of what a clinician actually writes down. Symptom descriptions, suspected adverse drug reactions that never become a formal diagnosis, patient-reported side effects, medication adherence notes, lifestyle and social context, the reasoning behind a treatment change — these live in unstructured clinical notes, not in claims fields. Research in *npj Digital Medicine* has shown that the most frequently cited reasons analysts cannot use the current claims-based Sentinel for a given safety question are the lack of clinical detail needed to identify outcomes accurately, missing or inaccurate measures of confounders, and the absence of computable phenotyping algorithms precise enough for the study population ([Desai et al., 2021](https://www.nature.com/articles/s41746-021-00542-0)).

Congress recognized the gap. The 21st Century Cures Act and subsequent directives required the FDA to expand Sentinel’s infrastructure to include electronic health record data on at least 10 million lives. The FDA’s Innovation Center stood up a Real-World Evidence Data Enterprise linking EHRs to claims for more than 25 million patients across commercial and academic partners ([Maro et al., *Clinical Pharmacology & Therapeutics*, 2023](https://pubmed.ncbi.nlm.nih.gov/39385712/)).

Getting the data is the easy part. Making it usable is harder, because the most valuable fields in an EHR are free text.

Why manual chart review does not scale to a population

Post-market surveillance operates at a scale where a human reviewer is a bottleneck, not a solution. Consider a signal-identification study of a drug used by a million patients: even if suspected adverse events show up in the notes of only 5% of those patients, that is 50,000 charts to review, with several relevant notes per chart. A team of ten experienced reviewers, each working at the typical rate of 20 to 30 charts per day, would need roughly 170 to 250 working days, close to a full year, for a single pass through a single study. By then the signal is either long-confirmed from another source or the drug has been on the market long enough that the safety question has shifted.
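The scale of that workload can be checked with a few lines of arithmetic. The sketch below reads the 20 to 30 charts per day as a per-reviewer rate, which is an assumption, and the function name and the `passes` parameter are hypothetical. `passes` stands in for anything that multiplies the work, such as a second independent review of every chart.

```python
def review_days(patients, flagged_pct, reviewers, charts_per_day, passes=1):
    """Working days for a team to review every flagged chart `passes` times.

    charts_per_day is assumed to be a per-reviewer rate.
    """
    charts = patients * flagged_pct // 100          # charts with a suspected event
    team_rate = reviewers * charts_per_day          # charts the whole team clears per day
    return charts * passes / team_rate


# Figures from the paragraph above: 1,000,000 patients, 5% flagged,
# ten reviewers, 20 to 30 charts per reviewer per day.
for rate in (20, 30):
    days = review_days(1_000_000, 5, reviewers=10, charts_per_day=rate)
    print(f"{rate} charts/day per reviewer: {days:.0f} working days")
# 20 charts/day per reviewer: 250 working days
# 30 charts/day per reviewer: 167 working days
```

A single pass already consumes most of a working year for ten people, and the total scales linearly with every additional pass or with a larger exposed population.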

The result is that safety investigations that require clinical detail either get rescoped down to what claims alone can answer, or they get dropped. Both outcomes are bad. Rescoping loses the granularity of the question. Dropping means the agency waits longer for a signal it could have caught.

This is the bottleneck that NLP is built to remove. Adverse event mentions, negation context, temporal relationships to drug exposure, symptom severity — these are standard outputs of modern clinical NLP pipelines. What has held the technology back from Sentinel is not the algorithms. It is regulatory-grade accuracy at production scale, and the governance required for an FDA program.
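To make "negation context" concrete, here is a deliberately tiny, rule-based sketch in the spirit of NegEx-style cue matching. It is not the MOSAIC-NLP pipeline and not any vendor's API. The term list, cue list, and token window are hypothetical values chosen for illustration, and production systems rely on trained models and validated vocabularies instead.

```python
import re

# Hypothetical mini-lexicons for illustration only.
EVENT_TERMS = ["nightmares", "mood swings", "suicidal ideation", "insomnia"]
NEGATION_CUES = ["no", "denies", "denied", "without", "negative for"]


def extract_events(note: str, window: int = 5):
    """Return (term, negated) pairs found in a note.

    A mention counts as negated when a cue appears within `window`
    tokens before it in the same sentence.
    """
    results = []
    for sentence in re.split(r"[.;\n]+", note.lower()):
        for term in EVENT_TERMS:
            match = re.search(r"\b" + re.escape(term) + r"\b", sentence)
            if not match:
                continue
            # Only look at the few tokens immediately preceding the mention.
            preceding = sentence[:match.start()].split()[-window:]
            context = " " + " ".join(preceding) + " "
            negated = any(f" {cue} " in context for cue in NEGATION_CUES)
            results.append((term, negated))
    return results


note = "Mother reports nightmares since starting montelukast. Denies suicidal ideation."
print(extract_events(note))
# [('nightmares', False), ('suicidal ideation', True)]
```

Even this toy shows why accuracy is the hard part: a cue followed by punctuation, a negation that comes after the mention, or a misspelled symptom would all slip past it.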

What the FDA actually chose

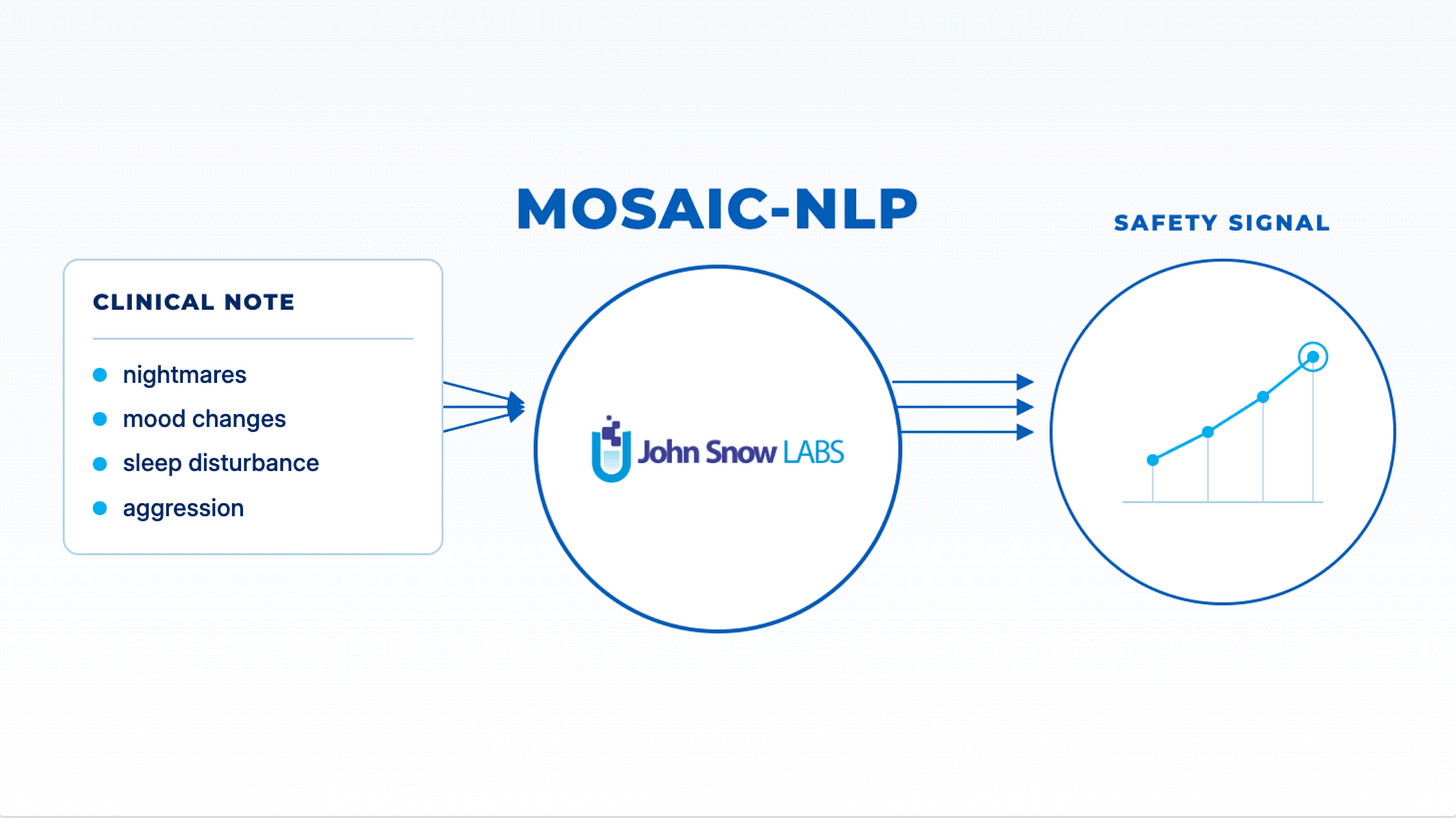

The project is called MOSAIC-NLP — Multi-source Observational Safety Study for Advanced Information Classification Using NLP models. It is a two-year effort under the Sentinel Innovation Center, with Cerner Enviza contributing the EHR and life-sciences research platform and John Snow Labs contributing the clinical NLP. Children’s Hospital of Orange County, National Jewish Health, and Kaiser Permanente Washington Health Research Institute are providing clinical expertise and access to real-world data.

The first use case is deliberately hard: montelukast. The asthma drug carries an FDA boxed warning for neuropsychiatric side effects including mood changes, sleep disturbance, and suicidal ideation. These events are the kind of signal that is frequently documented in clinical notes but rarely shows up as a billed diagnosis. A parent tells the pediatrician that a child has been having nightmares or sudden mood swings since starting the medication, and the observation stays in the note. If claims alone could have answered the question, the boxed warning would not have taken years of post-market accumulation to arrive.

The technical work is straightforward in outline and unforgiving in execution. Pipelines must extract drug exposures, adverse events, symptoms, severity, negation, and temporal relationships from notes written by thousands of different clinicians across different institutions. They must run at the scale of tens of millions of documents. They must do it under HIPAA Safe Harbor or Expert Determination, with no patient data leaving the institutions’ secure environments. And the outputs must be auditable and reproducible, because they will contribute to regulatory decisions.
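One way to picture the "auditable and reproducible" requirement is a fingerprint stored alongside every extraction. The sketch below is an assumption about how such a record could look, not a description of the project's actual tooling. `AuditRecord`, `audit`, and the version string are hypothetical names.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class AuditRecord:
    note_sha256: str       # fingerprint of the input text; the text itself is not stored here
    pipeline_version: str  # hypothetical identifier for the pinned pipeline release
    output_sha256: str     # fingerprint of the canonical JSON form of the output


def _sha256(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def audit(note: str, pipeline_version: str, output: dict) -> AuditRecord:
    # Sorting keys and fixing separators makes the serialization canonical,
    # so logically identical outputs always hash to the same value.
    canonical = json.dumps(output, sort_keys=True, separators=(",", ":"))
    return AuditRecord(_sha256(note), pipeline_version, _sha256(canonical))


first = audit("example note", "pipeline-1.0", {"event": "nightmares", "negated": False})
rerun = audit("example note", "pipeline-1.0", {"negated": False, "event": "nightmares"})
print(first == rerun)  # True: same input, same version, same output
```

If a rerun years later produces a different record for the same note and pipeline version, something in the environment changed, and that is exactly what an auditor needs to be able to detect.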

Why this is a credibility event for clinical NLP

For those of us who have been building medical language models, the MOSAIC-NLP selection is worth pausing on. The FDA picks its Sentinel partners carefully. The agency’s Innovation Center has been explicit that moving NLP into routine pharmacovigilance is a strategic priority, and that any system in that role has to meet the same bar of methodological rigor that the rest of Sentinel meets — peer-reviewed methods, reproducible analyses, distributed data governance, validated phenotypes.

Two things about the 2023 announcement signal where the field is going. First, the FDA selected partners whose NLP is already deployed at scale in other healthcare settings rather than partners offering a research prototype. John Snow Labs' Spark NLP for Healthcare is in production at health systems and pharma companies whose models need to run on billions of notes without sending data to an external API. Second, the program is not an exploratory study. It is a production evaluation designed to inform how the FDA routinely uses unstructured data in future safety investigations.

For those evaluating NLP for regulated healthcare workflows, three questions are now effectively settled at the agency level, and anyone procuring this technology should expect to answer them: Does the pipeline run inside your environment, with no data leaving it? Are the underlying models published, peer-reviewed, and independently validated on clinical benchmarks? Can the entire pipeline’s outputs be reproduced and audited years later? If any of those answers is “no,” the pipeline is not ready for regulated work.

What MOSAIC-NLP tells us about where real-world evidence is heading

The deeper story here is not about one asthma drug. It is about what real-world evidence looks like when it no longer depends on structured claims alone.

Claims-based Sentinel analyses can tell you that a patient was prescribed a drug, filled it, and later had an emergency room visit. They cannot tell you whether the patient’s mood changed, whether the dose was adjusted, whether a family member reported the change, or whether the clinician attributed the event to the drug and documented that attribution. All of that context sits in free text, and all of it changes what a pharmacoepidemiologist can and cannot conclude.

The Sentinel Innovation Center has laid out four strategic priorities to close this gap: data infrastructure that links EHRs with claims, feature engineering to turn notes into analyzable variables, causal inference methods that account for the new sources of bias these data introduce, and detection analytics that flag signals sooner. NLP is a prerequisite for the middle two. Without reliable extraction of adverse events, severity, negation, and temporality, the statistical methods downstream have nothing new to work with.
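Feature engineering in this sense means collapsing many extracted mentions into one variable per patient that a statistical model can use. A minimal sketch, assuming each mention arrives as a note date plus a negation flag. The function name and the 90-day risk window are hypothetical choices, not parameters from the Sentinel program.

```python
from datetime import date, timedelta


def event_after_exposure(exposure_start, mentions, risk_window_days=90):
    """Return 1 if any non-negated mention is dated inside the risk window
    that begins at exposure start, else 0.

    `mentions` is a list of (note_date, negated) pairs.
    """
    window_end = exposure_start + timedelta(days=risk_window_days)
    return int(any(
        not negated and exposure_start <= note_date <= window_end
        for note_date, negated in mentions
    ))


start = date(2023, 1, 10)
mentions = [
    (date(2022, 12, 1), False),  # before exposure: ignored
    (date(2023, 2, 1), True),    # negated: ignored
    (date(2023, 3, 1), False),   # affirmed and inside the window
]
print(event_after_exposure(start, mentions))  # 1
```

The sketch shows why extraction quality matters downstream: a missed negation or a wrong note date flips the variable, and the causal inference methods inherit the error.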

The direction is clear. The agency’s recent 2022-to-2024 Sentinel assessment notes that NLP-supported signal identification is being folded into routine pharmacovigilance activities ([FDA Sentinel System Assessment, 2025](https://www.fda.gov/media/189028/download)). Life sciences sponsors whose post-approval commitments include Sentinel-ready analyses will face the same expectations. The pharmaceutical companies already planning their next generation of post-marketing safety programs are asking the same three procurement questions the FDA is — accuracy on clinical benchmarks, in-environment deployment, reproducibility — and building their vendor evaluations around the answers.

Key takeaways

Post-market drug safety surveillance has lived inside claims data for most of its history because the technology to read clinical notes at scale was not ready. That is changing. The FDA’s selection of Cerner Enviza and John Snow Labs for MOSAIC-NLP is a concrete signal that production-grade clinical NLP is now considered reliable enough to contribute to regulatory decisions, starting with a drug whose most serious side effects show up in notes far more often than in claims.

For healthcare AI leaders, the takeaway is a procurement one. The same criteria the FDA is applying — peer-reviewed accuracy, in-environment deployment, reproducible outputs — are the criteria that should govern any clinical NLP procurement aimed at regulated work. The bar has moved. The technology has to meet it.

FAQ

What is the FDA Sentinel Initiative?

Sentinel is the FDA’s national post-market surveillance system for drugs, vaccines, biologics, and medical devices. It uses a distributed data network covering approximately 138 million people, primarily through insurance claims linked with a growing subset of electronic health records, and has informed more than 120 regulatory decisions since 2016.

Why is NLP being added to Sentinel now?

Claims data captures what was billed, not what was observed. Many adverse events — especially symptom-based events like mood changes, fatigue, or cognitive complaints — are documented in clinical notes but never reach a structured diagnosis code. NLP is the only scalable way to extract that information, and production-grade medical NLP has reached the accuracy and governance bar the FDA requires.

What is MOSAIC-NLP?

Multi-source Observational Safety Study for Advanced Information Classification Using NLP models — a two-year FDA Sentinel Innovation Center project with Cerner Enviza, John Snow Labs, Children’s Hospital of Orange County, National Jewish Health, and Kaiser Permanente Washington Health Research Institute. The first study evaluates neuropsychiatric side effects of montelukast.

Why montelukast as the first use case?

Montelukast carries an FDA boxed warning for neuropsychiatric side effects. These events are frequently documented in clinical notes but rarely coded as diagnoses, which makes them a strong test case for NLP-augmented signal identification and a question claims data alone cannot answer well.

What technical bar does NLP have to clear to be used in FDA regulatory work?

Three things, in my view. Peer-reviewed, independently validated accuracy on clinical benchmarks. Deployment inside the data owner’s secure environment with no patient data leaving — distributed data analytics is a core Sentinel principle. Fully reproducible and auditable outputs, because the results contribute to regulatory decisions that may be revisited years later.

Does this mean NLP will replace claims-based Sentinel analyses?

No. Claims data remains the backbone of Sentinel and is well-suited to the questions it was designed to answer. NLP extends Sentinel to questions claims alone cannot answer — questions where the clinical detail, symptom documentation, or temporal relationship to a drug exposure lives in the note text.

How should healthcare AI leaders interpret this announcement?

It is the clearest signal yet that clinical NLP is moving from research into regulated production. If you are procuring NLP technology for pharmacovigilance, real-world evidence, or any clinical workflow that may be audited, ask the same questions the FDA is asking: peer-reviewed accuracy, in-environment deployment, reproducibility. Vendors who cannot answer all three are not ready for this work.